ARE THERE ANY GODS?

TRADITIONAL ARGUMENTS EXAMINED,

WITH A CONSIDERATION OF

THE PROBLEM OF EVIL

I. The first-cause argument

According to the first-cause argument, everything in this world has to have a cause, and that cause in turn must have had its cause. If we follow this chain of causation back far enough, we must come ultimately to a First Cause, which must be God.

Critique: To say that "God is the cause of the world" immediately raises the question "What is the cause of that god?" If everything must indeed have a cause, then a god also must have a cause. If, however, anything can exist without a cause, it might just as well be the universe as God. If we are going to speculate that something can exist without a cause, it’s better for it to be something we actually know exists (the universe) rather than something we can’t even detect (a god).

Even if we allow the argument that "God" is the cause of the universe, that doesn't accomplish anything for the world's religions, since "God" is then simply a synonym for "cause," and there is no way to know anything more about it other than that it created the universe. In fact, we can't even know if "it" is an "it." It might have gender, as did the gods and goddesses of antiquity, or it might be some sort of animal (as were other deities of the past). It might be singular or plural. There is no more evidence that a single god created the world than that the world was created by a divine committee of some sort.

To conclude from the first-cause argument that a god exists is misleading, since the most that could be concluded if the argument were valid is that a cause exists. Furthermore, it should be noted that modern physics has seriously undermined the concept of causation. In quantum mechanics and related areas, statistical concepts have replaced the concept of cause and effect. If the concept of cause is not needed in certain areas of physics, it also may not be needed at the level of the universe. Physicists tell us that “virtual particles” are popping into and out of existence all the time. Why not universes also?

Finally, it is more plausible to suppose the universe has always existed (in some form or other) than that the only thing that has existed forever is something we can't even detect (a god). How long the "cosmic egg" existed before it exploded in the "Big Bang" that formed our universe is unknown and perhaps unknowable. To say that the Big Bang had to have had a cause and that cause is God gets us back to our first question: What was the cause of the cause of the Big Bang? If anything can exist without cause, it might just as well be the Big Bang.

II. The argument from natural law

According to the argument from natural law, the existence of the laws of nature implies the existence of a law-giver—a god. Without this god creating and enforcing these laws, the world would be chaos. Without the law of gravity, for example, planets would be moving all over the place. A god is needed to keep the universe orderly.

Critique: First of all, this argument confuses human laws with natural laws. While it is true that there would be no human laws if there had been no law-givers such as Hammurabi or the United States Congress, so-called natural laws do not require a law-giver. "Natural laws" are simply statements of how in fact the universe is seen to act.

Secondly, the term "natural law" is used less and less now in science, being replaced by the term "theory." Although we still speak of Newton's laws, those laws have been largely replaced by Einstein's theory. Since natural laws are simply descriptions of how in fact things operate, there is no need to suppose those things are being coerced into acting that way. Furthermore, we have already seen that in certain areas of science these so-called laws are actually statistical averages of the sort that derive from the "laws" of chance. A law-giver is no more needed to explain them than to explain the fact that when we throw dice, double sixes can be expected about one out of every thirty-six throws.
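The point about dice can be checked directly. The short Python sketch below is an illustration added here, not part of the original argument, and its function names are hypothetical. It first counts the 36 equally likely outcomes of two fair dice to get the exact chance of a double six, then simulates many throws with Python's standard random module to show the observed rate settling near that value with nothing but chance at work.

```python
import random
from fractions import Fraction
from itertools import product


def exact_double_six_probability() -> Fraction:
    """Exact chance of a double six: favorable outcomes over all 36 pairs."""
    outcomes = list(product(range(1, 7), repeat=2))  # every (die1, die2) pair
    favorable = sum(1 for a, b in outcomes if a == 6 and b == 6)
    return Fraction(favorable, len(outcomes))


def simulated_double_six_rate(throws: int, seed: int = 0) -> float:
    """Throw two simulated fair dice `throws` times; return the share of double sixes."""
    rng = random.Random(seed)  # fixed seed so the run can be repeated
    hits = 0
    for _ in range(throws):
        a, b = rng.randint(1, 6), rng.randint(1, 6)
        if a == 6 and b == 6:
            hits += 1
    return hits / throws


if __name__ == "__main__":
    print(exact_double_six_probability())       # 1/36
    print(simulated_double_six_rate(100_000))   # near 1/36 (about 0.028); exact digits depend on the seed
```

Nothing in the simulation instructs the dice how to fall; the one-in-thirty-six regularity comes from counting equally likely cases.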

Finally, even if we supposed that things in nature behave the way they do because they are obeying divine commands, we must then deal with the question "Why did God issue just those natural laws and not different ones?" The Nobel Prize-winning philosopher and logician Bertrand Russell explains:

"If you say that he did it simply from his own good pleasure, and without any reason, you then find that there is something which is not subject to law, and so your train of natural law is interrupted. If you say, as more orthodox theologians do, that in all the laws which God issues he had a reason for giving those laws rather than others—the reason, of course, being to create the best universe, although you would never think it to look at it—if there were a reason for the laws which God gave, then God himself was subject to law, and therefore you do not get any advantage by introducing God as an intermediary. You have really a law outside and anterior to the divine edicts, and God does not serve your purpose, because he is not the ultimate law-giver."

III. The argument from the “anthropic principle”

Derived from the natural-law argument, this argument claims that the laws of nature are exactly those needed to create and sustain human beings. If the force of gravity, say, or the charge of the electron were slightly different, human life would be impossible. If water did not expand when it freezes, life itself would be impossible. If the laws of nature were slightly different, we would not be here. In fact, however, the laws of nature are exactly those needed for human existence. They must have been selected by a divine creator especially for the purpose of creating human beings.

Critique: This argument supposes, without evidence, that the so-called laws of nature could be different. As far as we can tell, the laws of nature are the way they are of necessity—they can’t be different. (Of course this cannot be proved, but we have no evidence to make us think otherwise.) Furthermore, it raises the question, "Why did God want to create humans?" As with the natural-law argument, if God wanted to create humans for no reason other than his own good pleasure, we have something not subject to natural law. If, however, he had a reason for the human-compatible laws he made, then once again we have a law outside and beyond God. Creating a god as the author of the anthropic principle does not really answer the question "Why are the laws of nature compatible with human life?"

Furthermore, it is not possible to show that sentient life of some sort could not exist in at least some other imaginable universes with different laws, and so the uniqueness of the human situation is lessened somewhat. However this may be, it must be observed that even though the laws of nature are compatible with human life, they seem to have produced humanity more by accident than by purpose. After all, somewhere between twelve and twenty billion years have elapsed since the time of the Big Bang, but humans of our kind have only existed during the last 250,000 years or so. If the purpose of the universe was to create human beings, its creator seems to have been extremely lacking in motivation!

Ultimately, there is a striking similarity between the claim that all the laws of nature are the way they are just so we can be where we are and the story of two men lost in the midst of a giant shopping mall. After wandering about for a while, they come upon an information kiosk with a map of the mall. They search the map and find an arrow labeled “YOU ARE HERE.” One guy says to the other, “You know? This is amazing. Every time I get lost in a mall and find one of these signs, the arrow is always absolutely correct. I'm always exactly where it says I am at the moment!”

IV. The argument from design

This is very similar to the anthropic-principle argument. According to it, not only were the laws of nature created just for humans, but nature itself and the structures of the organisms in it carry the marks of a divine designer and were created for us. Just as a watch betrays the existence of a designing watchmaker, so too the human eye and the world of nature in general bespeak the existence of a divinely talented designer.

Critique: Ever since the time of Charles Darwin, we have understood that environments were not created to be compatible with the life-forms living in them. Rather, living things have evolved adaptations that allow them to live in the environments in which they are found. Thus, land vertebrates had aquatic ancestors that evolved adaptations that allowed them to live on land. Whales, on the other hand, had terrestrial ancestors that evolved adaptations to live in the water again!

The principles of mutation and natural selection are able to accomplish everything theologians once thought only a divine watchmaker could do—including the creation of the human eye. Since evolutionary processes are in effect a "blind watchmaker," we are not surprised to learn that the human eye is not "designed" as well as could be imagined if real intelligence were behind its creation. Not only are our retinas on backwards (the photoreceptor cells face toward the center of our heads instead of toward the front), but the eyes of many of us are poorly shaped as well, so that we have to wear glasses. Although we may suppose our noses are divinely shaped so they can support the spectacles needed for clear eyesight, it is suspicious that the supposed intelligence that created noses suitable for spectacles did not at the same time provide the spectacles. Once again, to quote Bertrand Russell:

"When you come to look into this argument from design, it is a most astonishing thing that people can believe that this world, with all the things that are in it, with all its defects, should be the best that omnipotence and omniscience have been able to produce in millions of years. I really cannot believe it. Do you think that, if you were granted omnipotence and omniscience and millions of years in which to perfect your world, you could produce nothing better than the Ku Klux Klan or the Fascists?"

V. The argument from moral necessity

One form of this argument claims that if there were no god, there would be no difference between right and wrong. All would be permitted. A righteous god is needed to preserve morals.

Critique: Strictly speaking, this cannot prove the existence of anything, let alone a god. It can only frighten people into believing in a god for fear of losing their moral systems. The presence or absence of morality is logically unrelated to the question of whether one or seventy gods exist.

But there are further problems with the argument. If indeed there is a difference between right and wrong, is that difference due to a god's arbitrary fiat (command), or is there some inherent principle by which right and wrong can be distinguished? If it is simply a god's fiat or whim that makes right right and wrong wrong, then for the god him/her/its-self there is no difference between right and wrong, and it is meaningless to say that the god in question is good. If, however, a god's commandments are good independent of the fact that he/she/it made them, then it was not through that god that right and wrong came into being: they are logically independent and separate from the god. There is then no reason to look to a god to understand good and bad. Good and bad would then be principles that we can figure out without the need of a god.

VI. The argument from prophecy

This argument claims that prophets in the Old and New Testaments of the Christian Bible made scores of prophecies that were fulfilled centuries later, long after the prophets had died. Only if a god had given them supernatural foresight could they have done that. Thus, the great accuracy of his prophets proves the existence of the Christian god.

Critique: This argument is based upon the astonishing assumption that when the prophets were speaking to the people of their times, they weren't really speaking to them! Thus, when Jesus said "I tell you this: the present generation will live to see it all. Heaven and earth will pass away ... " [Matt. 24:34-35], he wasn't speaking to his own generation. (Imagine their disappointment!) When he said "I tell you this: there are some of those standing here who will not taste death before they have seen the kingdom of God already come in power" [Mark 9:1], he wasn't really talking to the people standing before him! Jesus apparently was only fooling those people into thinking he was talking to them, if we are to believe the argument from prophecy.

But there are other problems with the argument from prophecy, foremost of which is the simple fact that prophecy frequently fails. Not only was Jesus wrong in the two prophecies just quoted, but prophecy fails in the Old Testament as well. Ezekiel [chapter 29] prophesied that Nebuchadnezzar, King of Babylon, would conquer Egypt and destroy and disperse its people; that Egypt would remain uninhabited for forty years; and that the Nile would dry up. Of course, none of this came true.

Worse than the failed prophecies are the falsified prophecies—prophecies written after the events in question had already become history. Scholars have shown, for example, that the Book of Daniel is a forgery written centuries after the time of the Babylonian captivity (597–538 B.C.E.), probably around the year 165 B.C.E. The prophecies in Daniel that actually came true were already history to its author.

The divine inspiration of the Judaeo-Christian scriptures—and thus the existence of a divinity inspiring them—is further called into question by the great number of contradictions in those scriptures. Thus, Daniel 1:2 says that it was King Jehoiakim who was carried off into captivity by Nebuchadnezzar; 2 Kings 24:6-12 says Jehoiakim was already dead and it was his son, Jehoiachin, who was taken captive.

VII. The problem of evil

This is actually an argument against the existence of a god who is good, omnipotent, and omniscient at the same time. It was first stated clearly by the Greek philosopher Epicurus, who lived 341-270 B.C.E.

Either God wants to abolish evil, and cannot;

Or he can, but does not want to;

Or he cannot, and does not want to.

If he wants to, but cannot, he is impotent.

If he can, but does not want to, he is wicked.

If he neither can, nor wants to,

He is both powerless and wicked.

But if (as they say) God can abolish evil,

And God really wants to do it,

Why is there evil in the world?

Put simply, this argument rules out the possibility that a god, if there is one, is simultaneously good, omnipotent, and omniscient. This effectively rules out the god of Christianity, but perhaps does not rule out the gods of certain other religions.

VIII. The Argument Against Proving a Negative

BELIEVER: How do you know there is no god? What proof do you have?

ATHEIST: How do you know there is no Easter bunny? How do you know there is no Santa Claus? Have you disproved the existence of Thor and Osiris?

BELIEVER: Be serious! Those are just myths made up by men. I'm talking about God!

ATHEIST: Well, the burden of proof is on you to prove that a deity exists. I don't have to prove a negative. The burden of proof is always on the person who alleges the existence of something.

BELIEVER: I don't buy that. You have to prove that my God does not exist.

ATHEIST: Your god? Singular? How do you know there aren't lots of gods? Have you disproved the existence of goddesses?

BELIEVER: Don't be silly! I'm talking about the existence of God—the creator of the universe.

ATHEIST: Ah! Now we're getting somewhere! You're talking about me!

BELIEVER: Since when are you God?

ATHEIST: Since just a bit more than an infinite length of time. Of course, I created you just three minutes ago.

BELIEVER: That's crazy! I'm fifty-seven years old!

ATHEIST: Of course you think you are: I created those memories in you, and I altered everyone else's memories also, to make it appear that you were around before three minutes ago.

BELIEVER: I suppose you created my birth certificate too! What evidence do you have to support such an absurd idea?

ATHEIST: Ah! So you're beginning to understand that the burden of proof is on the person who makes the claim of a god's existence. Don't you think you should try to disprove the claim that I am god?

BELIEVER: Well, maybe. If you're god, why don't you perform a miracle?

ATHEIST: Good question. Unfortunately, I don't do miracles anymore. I could if I wanted to, but I've decided that from now on, people have to believe in me through faith. Being God, I've just now read your mind and I see you're thinking that you might be able to torture me into confessing that I'm not God. Well, scrap that idea! I might very well decide to pretend to be in pain and confess all sorts of silly things. But believe me, I would punish you for eternity after you die!

BELIEVER: Hey, that's not legitimate argumentation. There's nothing I could ever do to disprove your claim of divinity. You could always wriggle out of it by claiming you'll show me after I'm dead!

ATHEIST: Very true! You're learning how impossible it is to prove a negative. But you're learning an even more important lesson.

BELIEVER: What's that?

ATHEIST: You're learning that it is stupid to argue about propositions that can't be tested even in the imagination. For every test you could imagine trying, I could come up with a way to evade your net—in just the same way as the preachers tell me your god doesn't want to get involved in my tests. My claim to divinity can't be tested. Your claims of the divinity of Jehovah or Jesus can't be tested either. If I call upon your god to strike me with lightning if I'm wrong, I guarantee nothing will happen. Your god won't get involved any more than I will. Claims that can't be tested even in the imagination are meaningless. They can't even be false. We don't need to waste our time trying to disprove them. You aren't going to waste your time trying to disprove my claim to divinity, and no sane person will waste time trying to disprove the existence of your untestable god. Of course, when you accidentally make a claim about your divinity nominee that is testable, sane people might take the time to show you how the test results turn out to be negative. But in general, no one is going to waste time trying to prove that Jehovah and I are not gods.

ATHEIST: How do you know there is no Easter bunny? How do you know there is no Santa Claus? Have you disproved the existence of Thor and Osiris?

BELIEVER: Be serious! Those are just myths made up by men. I'm talking about God!

ATHEIST: Well, the burden of proof is on you to prove that a deity exists. I don't have to prove a negative. The burden of proof is always on the person who alleges the existence of something.

BELIEVER: I don't buy that. You have to prove that my God does not exist.

ATHEIST: Your god? Singular? How do you know there aren't lots of gods? Have you disproved the existence of goddesses?

BELIEVER: Don't be silly! I'm talking about the existence of God—the creator of the universe.

ATHEIST: Ah! Now we're getting somewhere! You're talking about me!

BELIEVER: Since when are you God?

ATHEIST: Since just a bit more than an infinite length of time. Of course, I created youjust three minutes ago.

BELIEVER: That's crazy! I'm fifty-seven years old!

ATHEIST: Of course you think you are: I created those memories in you, and I altered everyone else's memories also, to make it appear that you were around before three minutes ago.

BELIEVER: I suppose you created my birth certificate too! What evidence do you have to support such an absurd idea?

ATHEIST: Ah! So you're beginning to understand that the burden of proof is on the person who makes the claim of a god's existence. Don't you think you should try to disprove the claim that I am god?

BELIEVER: Well, maybe. If you're god, why don't you perform a miracle?

ATHEIST: Good question. Unfortunately, I don't do miracles anymore. I could if I wanted to, but I've decided that from now on, people have to believe in me through faith. Being God, I've just now read your mind and I see you're thinking that you might be able to torture me into confessing that I'm not God. Well, scrap that idea! I might very well decide to pretend to be in pain and confess all sorts of silly things. But believe me, I would punish you for eternity after you die!

BELIEVER: Hey, that's not a legitimate argument. There's nothing I could ever do to disprove your claim of divinity. You could always wriggle out of it by claiming you'll show me after I'm dead!

ATHEIST: Very true! You're learning how impossible it is to prove a negative. But you're learning an even more important lesson.

BELIEVER: What's that?

ATHEIST: You're learning that it is stupid to argue about propositions that can't be tested even in the imagination. For every test you could imagine trying, I could come up with a way to evade your net, in just the same way as the preachers tell me your god doesn't want to get involved in my tests. My claim to divinity can't be tested. Your claims of the divinity of Jehovah or Jesus can't be tested either. If I call upon your god to strike me with lightning if I'm wrong, I guarantee nothing will happen. Your god won't get involved any more than I will. Claims that can't be tested even in the imagination are meaningless. They can't even be false. We don't need to waste our time trying to disprove them. You aren't going to waste your time trying to disprove my claim to divinity, and no sane person will waste time trying to disprove the existence of your untestable god. Of course, when you accidentally make a claim about your divinity nominee that is testable, sane people might take the time to show you how the test results turn out to be negative. But in general, no one is going to waste time trying to prove that Jehovah and I are not gods.

SPIRIT, SOUL, AND MIND

Whenever I peruse a dictionary, I am struck by the amazing number of words which refer to nothing at all in the real world. Many of the words are obviously fabulous: leprechaun, unicorn, gremlin, Philosopher's Stone, Zeus, elf, Fountain of Youth, ghost, etc. Others, though referring equally to non-existent things, are less obviously fabulous: The Mean Sun, The Average Citizen, vital force, spirit, soul, and (in at least some of its accepted meanings) mind.

Why the human species has invented so many words which refer to nothing in reality is a most interesting question for scientific investigation, and it probably would require a complete book to elucidate properly. In this article I shall only attempt to deal with a few such words, specifically, the words spirit, soul, and mind.

It is a striking fact that nearly all languages of the world, extinct as well as extant, have (or have had) words which could be rendered as 'spirit' or 'soul' in English. At first glance, it would seem that this is a good argument in favor of the real existence of souls and spirits. For, would it not be improbable that so many different peoples and languages could be mistaken? If many different unrelated languages have independently invented words for soul, is that not a good reason to believe they did so because there really is such a thing?

I think not. The first clue to the solution of this puzzle comes from etymology, the study of word origins.

While the origin of the English word soul is obscure, the word almost certainly had its origin in a word which meant 'breath' or 'wind' or 'air', or something like that. The word spirit (generally a synonym for soul) comes from the Latin spiritus, which clearly meant 'breath' originally. Spiritual and respiratory both derive from the same root!

Moreover, if we check in the Greek and Hebrew bibles to see which words are translated as 'soul', etc., in the King James Version, we will find many whose literal meaning is 'breath' or 'wind'. For example, the Hebrew word neshamah (literally meaning 'breath') is twice rendered as 'spirit', once as 'soul'. The Hebrew-Aramaic word ruach (lit., 'wind') is rendered 240 times as 'spirit', six times as 'mind.' The word nephesh (lit., 'breath') is rendered 'soul' 428 times, 'mind' 15 times, 'ghost' twice, and 'life' 119 times.

Turning to the Greek Bible, we find pneuma (lit., 'breath') rendered as 'ghost' 91 times (including the rendering 'Holy Ghost'), 292 times as 'spirit'. The reader will recognize the same root in the word pneumonia, a word referring to a disease of the organs of breath. And finally, in this somewhat pedantic parade of words, we may note the important word psyche. As expected, its literal meaning is 'breath.' As we might have guessed, it is rendered as 'soul' 58 times, 'mind' three times, and 'life' 40 times.

The fact that nearly all words now meaning 'soul', 'spirit', 'life', etc., trace their origins to words meaning 'breath' or 'wind' leads me to conclude that the derived meanings were an outgrowth of the inability of primitive people to solve a basic biological puzzle, namely, what constitutes the difference between a live body and a dead one?

To the ancient authors of the Bible, men who still thought they were living on a flat earth beneath a solid sky (firmament), the solution seemed deceptively simple: living things breathe, dead things do not. At first, only animals (from Latin anima, meaning 'breath' or 'breeze' originally) were considered fully alive. The case of plants was viewed with confusion for a long time. Some authorities considered them alive, others did not. The ancients did not realize that 'souls' were really only a gaseous mixture of nitrogen and oxygen, contaminated with varying amounts of water vapor, carbon dioxide, noble gases, and (depending upon what one ate and whether or not one brushed after every meal) varying amounts of aromatic substances!

In the Genesis Second Creation Myth, the animating power of breath is clearly depicted. Yahweh, after having molded Adam from the dust, has to breathe into him the breath of life in order for him to become a living soul. Breath is life.

The manner in which breath became equated with life is not difficult to discern. A person newly dead, say, of a heart attack, anatomically is not much different from what he was like before he died. He still has five fingers per hand, a tongue in his mouth, a brain in his head, and a heart in his breast. The ancients, unconscious of the microcosmic fever of chemical marriages and divorces that we call metabolism, could see only one obvious difference: the lack of breath in the dead.

When a man expired (lit., 'breathed out'), his spirit (lit., 'breath') left his body, and he died. When a man sneezed, his spirit was forcefully ejected from his body, and one had to say "God bless you" or make a magical gesture, such as the sign of the cross, very quickly, before evil spirits could come to take over the momentarily spiritually vacant carcass. Demonic "possession" was the result, quite simply, of inhaling one or more of the evil breaths thought to hover in the air around us. For early Christians, the Devil's breath was everywhere.

Of course, not all possession was necessarily evil. People could be "inspired"; that is, the breath of a god could take over their bodies to deliver words of wisdom or apocalyptic admonitions. Indeed, the Christian church itself was thought to have originated in an act of mass possession by a Holy Ghost ("Holy Breath" in the Greek text!). In Acts 4:31 we read that when the Apostles and others "had ended their prayer, the building where they were assembled rocked, and all were filled with the Holy Spirit [breath] and spoke the word of God with boldness." (Given the close association of words with breath, thought to be life itself, is it any wonder that religions of all kinds have always focused on the magical significance of words?)

Lest anyone still think the link between breath and the foundations of Christianity be doubtful, attention is drawn to the tale running through John 20:22. Jesus has come back to visit the Disciples to tell them that he is sending them out to forgive or not forgive the sins of the world. "Then he [Jesus] breathed on them, saying, 'Receive the Holy Spirit!'" Right from the beginning, Christianity was based upon warm breath—which in time became hot air.

Modern biologists, unlike the ancient makers of myths, know that all the phenomena of living systems can be reduced to physical and chemical terms. They have no evidence of any 'vital force' or mystical spirit—and no need to seek for such. They recognize the fully alive body and the newly dead body to be but two arbitrary points along a continuum of decreasing organization.

So much for spirit, soul, and ghost. Originally denoting breath or wind, they are words which have acquired a host of mystical connotations as prescientific people attempted to account for the difference between life and death. But what of the word mind? Does it refer to anything real? Or is it, too, a fabulous entity?

Unlike the analysis of spirit and soul, the analysis of mind is not at all simple. This is so largely through the grammatical accident that in all the European languages, ancient as well as modern, the word mind is a noun.

We tend to think of nouns as substantive: table, chair, and plumb-bob are all nouns, and all are substantial. There are many words, however, which though grammatically nouns, are not at all substantial. Words like beauty, truth, and velocity would be examples. Unfortunately, our thinking tends to be hedged around by the grammar and hidden assumptions of the language with which we think. And so it happens again and again that abstract nouns come to be thought of as representing things just as substantial as those represented by common nouns. And thus we have the basic confusion necessary to found philosophical systems such as Plato's—whose perfect triangularity exists in triangle-heaven, and so on.

Because mind was a noun, it was conceived to be a thing. Because it was thought to be a thing, it was thought to have existence apart from the brain. Because it was supposed to exist independently, it was thought capable of surviving the death of the body. And millions thought that to be good reason to invest millions in that greatest of all businesses, religion.

Neurobiological studies show all these ideas to be quite worthless. Mind is a process, a dynamic relation, and not a thing. If we change the processes of the brain, we change the mind. The psychedelic drugs have taught us that fact, if nothing else. The history of western philosophy and religion, as well as science, would have been quite different if the word mind had developed as a verb instead of as a noun.

To wonder where the mind goes after the brain decays is as silly as asking where the 70-miles-per-hour have gone after a speeding auto has crashed into a tree. Just as the relative motion of an auto can be altered only within certain limits and still represent the process called "speeding," so too we can alter the functioning of the brain only so much before the process called "mind" or "thinking" becomes altered out of existence.

Now that scientists recognize mind as a process rather than a thing, they are making rapid advances in understanding the specific brain dynamics that correspond to the various subjective states collectively known as mind. Certain drugs are known, for example, that affect certain neural paths and centers in the brain to produce the psychic state known as euphoria. Others affect other circuits and produce depression or sleep. We can implant electrodes in the brain and cause the subject to "hear" bells and symphonies that aren't "there" at all. We can be made to "see" figures and lights without using our eyes at all, by stimulating the visual cortex at the back of the brain.

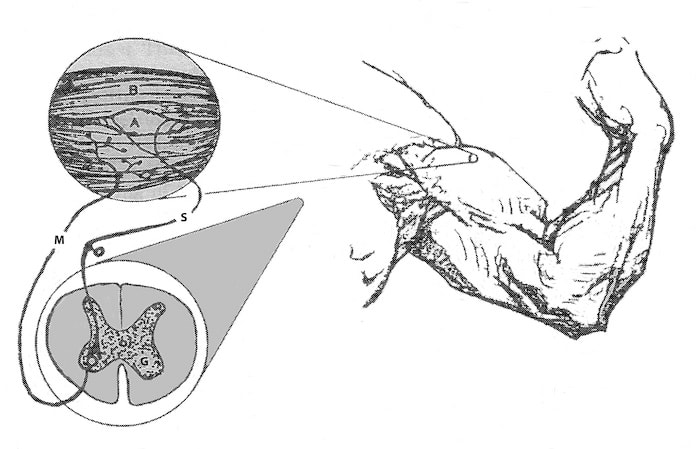

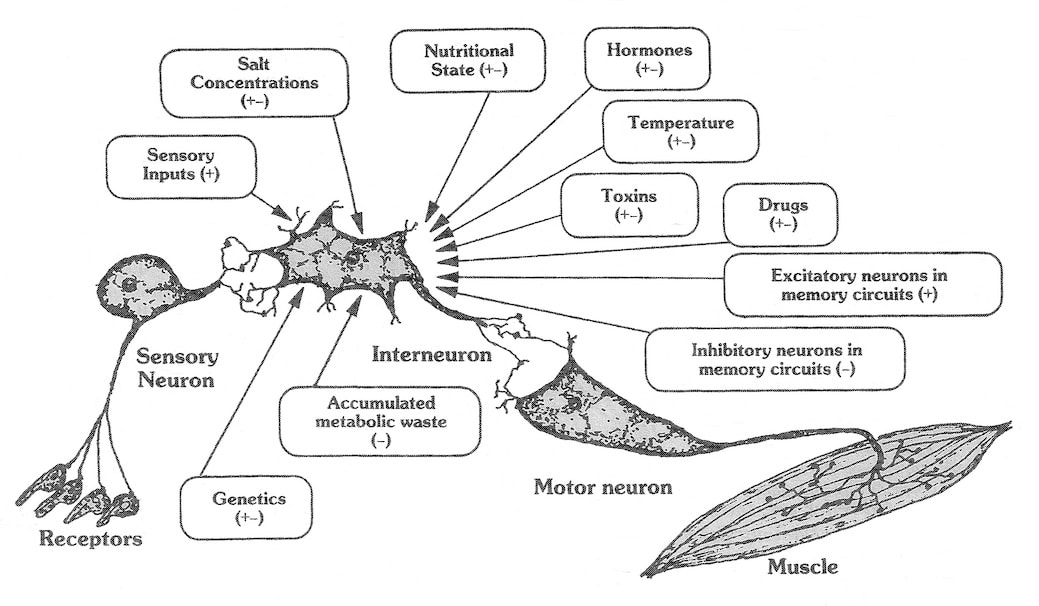

We can cause to appear the emotions of rage, sexuality, sorrow, religious awe, etc., by altering the dynamic functions of the brain in appropriate ways. We are beginning to understand how neural circuits compete with each other to give us the illusion of "free will." Indeed, we are on the verge of being able to write equations relating the physicochemical states of the nervous system with the subjective, mental states described by psychologists and other mystics. In short, we are learning to study subjective states objectively.

Whether or not we shall be any more responsible in the application of this new knowledge than we were in the application of fire, dynamite, and atomic energy remains to be seen. Even the un-average person plays ill the part of Prometheus. Unless we, collectively the new Prometheus, judge wisely what to do with our new psychobiological powers, like Prometheus we may find ourselves chained to rocks, our vitals torn by eagles. Or worse.

ETHICS WITHOUT GODS

Introduction

One of the first questions Atheists are asked by true believers and doubters alike is, "If you don't believe in God, there's nothing to prevent you from committing crimes, is there? Without the fear of hell-fire and eternal damnation, you can do anything you like, can't you?"

It is hard to believe that even intelligent and educated people could hold such an opinion, but they do. It seems never to have occurred to them that the Greeks and Romans, whose gods and goddesses were something less than paragons of virtue, nevertheless led lives not obviously worse than those of the Baptists of Alabama! Moreover, pagans such as Aristotle and Marcus Aurelius—although their systems may not be suitable for us today—managed to produce ethical treatises of great sophistication, a sophistication rarely if ever equaled by Christian moralists.

The answer to the questions posed above is, of course, "Absolutely not!" The behavior of Atheists is subject to the same rules of sociology, psychology, and neurophysiology that govern the behavior of all members of our species—religionists included. Moreover, despite protestations to the contrary, we may assert as a general rule that when religionists practice ethical behavior, it isn't really due to their fear of hell-fire and damnation, nor is it due to their hopes of heaven. Ethical behavior—regardless of who the practitioner may be—results always from the same causes and is regulated by the same forces, and has nothing to do with the presence or absence of religious belief. The nature of these causes and forces is the subject of this essay.

Psychobiological Foundations

As human beings, we are social animals. Our sociality is the result of evolution, not choice. Natural selection has equipped us with nervous systems which are peculiarly sensitive to the emotional status of our fellows. Among our kind, emotions are contagious, and it is only the rare psychopathic mutants among us who can be happy in the midst of a sad society. It is in our nature to be happy in the midst of happiness, sad in the midst of sadness. It is in our nature, fortunately, to seek happiness for our fellows at the same time as we seek it for ourselves. Our happiness is greater when it is shared.

Nature also has provided us with nervous systems which are, to a considerable degree, imprintable. To be sure, this phenomenon is not as pronounced or as ineluctable as it is, say, in geese—where a newly hatched gosling can be "imprinted" to a toy train and will follow it to exhaustion, as if it were its mother. Nevertheless, some degree of imprinting is exhibited by humans. The human nervous system appears to retain its capacity for imprinting well into old age, and it is highly likely that the phenomenon known as "love-at-first-sight" is a form of imprinting. Imprinting is a form of attachment behavior, and it helps us to form strong interpersonal bonds. It is a major force which helps us to break through the ego barrier to create "significant others" whom we can love as much as ourselves. These two characteristics of our nervous system—emotional suggestibility and attachment imprintability—although they are the foundation of all altruistic behavior and art, are thoroughly compatible with the selfishness characteristic of all behaviors created by the process of natural selection. That is to say, to a large extent behaviors which satisfy ourselves will be found, simultaneously, to satisfy our fellows, and vice-versa.

This should not surprise us when we consider that among the societies of our nearest primate cousins, the great apes, social behavior is not chaotic, even if gorillas do lack the Ten Commandments! The young chimpanzee does not need an oracle to tell it to honor its mother and to refrain from killing its brothers and sisters. Of course, family squabbles and even murder have been observed in ape societies, but such behaviors are exceptions, not the norm. So too it is in human societies, everywhere and at all times.

The African apes, whose genes are ninety-eight to ninety-nine percent identical to ours, go about their lives as social animals, cooperating in the living of life, entirely without the benefit of clergy and without the commandments of Exodus, Leviticus, or Deuteronomy. It is further cheering to learn that sociobiologists have even observed altruistic behavior among troops of baboons. More than once, in troops attacked by a leopard, aged males past reproductive age have been observed to linger at the rear of the escaping troop and to engage the leopard in what often amounts to a suicidal fight. As the old male delays the leopard's pursuit by sacrificing his very life, the females and young escape and live to fulfill their several destinies. The heroism which we see acted out, from time to time, by our fellow men and women, is far older than their religions. Long before the gods were created by the fear-filled minds of our less courageous ancestors, heroism and acts of self-sacrificing love existed. They did not require a supernatural excuse then, nor do they require one now.

Given the general fact, then, that evolution has equipped us with nervous systems biased in favor of social, rather than antisocial, behaviors, is it not true, nevertheless, that antisocial behavior does exist, and that it exists in amounts greater than a reasonable ethicist would find tolerable? Alas, this is true. But it is true largely because we live in worlds far more complex than the Paleolithic world in which our nervous systems originated. To understand the ethical significance of this fact, we must digress a bit and review the evolutionary history of human behavior.

Nature also has provided us with nervous systems which are, to a considerable degree, imprintable. To be sure, this phenomenon is not as pronounced or as ineluctable as it is, say, in geese—where a newly hatched gosling can be "imprinted" to a toy train and will follow it to exhaustion, as if it were its mother. Nevertheless, some degree of imprinting is exhibited by humans. The human nervous system appears to retain its capacity for imprinting well into old age, and it is highly likely that the phenomenon known as "love-at-first-sight" is a form of imprinting. Imprinting is a form of attachment behavior, and it helps us to form strong interpersonal bonds. It is a major force which helps us to break through the ego barrier to create "significant others" whom we can love as much as ourselves. These two characteristics of our nervous system—emotional suggestibility and attachment imprintability—although they are the foundation of all altruistic behavior and art, are thoroughly compatible with the selfishness characteristic of all behaviors created by the process of natural selection. That is to say, to a large extent behaviors which satisfy ourselves will be found, simultaneously, to satisfy our fellows, and vice-versa.

This should not surprise us when we consider that among the societies of our nearest primate cousins, the great apes, social behavior is not chaotic, even if gorillas do lack the Ten Commandments! The young chimpanzee does not need an oracle to tell it to honor its mother and to refrain from killing its brothers and sisters. Of course, family squabbles and even murder have been observed in ape societies, but such behaviors are exceptions, not the norm. So too it is in human societies, everywhere and at all times.

The African apes, whose genes are ninety-eight to ninety-nine percent identical to ours, go about their lives as social animals, cooperating in the living of life, entirely without the benefit of clergy and without the commandments of Exodus, Leviticus, or Deuteronomy. It is further cheering to learn that sociobiologists have even observed altruistic behavior among troops of baboons. More than once, in troops attacked by a leopard, aged males past reproductive age have been observed to linger at the rear of the escaping troop and to engage the leopard in what often amounts to a suicidal fight. As the old male delays the leopard's pursuit by sacrificing his very life, the females and young escape and live to fulfill their several destinies. The heroism which we see acted out, from time to time, by our fellow men and women, is far older than their religions. Long before the gods were created by the fear-filled minds of our less courageous ancestors, heroism and acts of self-sacrificing love existed. They did not require a supernatural excuse then, nor do they require one now.

Given the general fact, then, that evolution has equipped us with nervous systems biased in favor of social, rather than antisocial, behaviors, is it not true, nevertheless, that antisocial behavior does exist, and that it exists in amounts greater than a reasonable ethicist would find tolerable? Alas, this is true. But it is true largely because we live in a world far more complex than the Paleolithic world in which our nervous systems originated. To understand the ethical significance of this fact, we must digress a bit and review the evolutionary history of human behavior.

A Digression

Today, heredity can control our behavior in only the most general of ways; it cannot dictate precise behaviors appropriate for infinitely varied circumstances. In our world, heredity needs help.

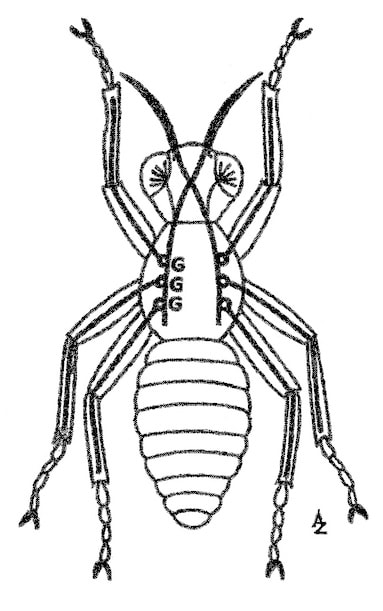

In the world of a fruit fly, by contrast, the problems to be solved are few in number and highly predictable in nature. Consequently, a fruit fly's brain is largely "hard-wired" by heredity. That is to say, most behaviors result from environmental activation of nerve circuits which are formed automatically by the time the adult fly emerges. This is an extreme example of what is called instinctual behavior. Each behavior is coded for by a gene or genes which predispose the nervous system to develop certain types of circuits and not others, so that it is all but impossible for the fly to act contrary to its genetically predetermined script.

The world of a mammal, say a fox, is much more complex and unpredictable than that of the fruit fly. Consequently, the fox is born with only a portion of its neuronal circuitry hard-wired. Many of its neurons remain "plastic" throughout life. That is, they may or may not hook up with each other in functional circuits, depending upon environmental circumstances. Learned behavior is behavior which results from activation of these environmentally conditioned circuits. This plasticity allows the individual mammal to acquire, by trial and error, a greater number of adaptive behaviors than could be transmitted by heredity. A fox would be wall-to-wall genes if all its behaviors were specified genetically.

With the evolution of humans, however, environmental complexity increased out of all proportion to the genetic and neuronal changes distinguishing us from our simian ancestors. This was due partly to the fact that our species evolved in a geologic period of great climatic flux (the Ice Ages), and partly to the fact that our behaviors themselves began to change our environment. The changed environment in turn created new problems to be solved. Their solutions further changed the environment, and so on. Thus, the discovery of fire led to the burning of trees and forests, which led to the destruction of local water supplies and watersheds, which led to the development of architecture with which to build aqueducts, which led to laws concerning water rights, which led to international strife, and on and on.

Given such complexity, even the ability to learn new behaviors is, by itself, inadequate. If trial and error were the only means, most people would die of old age before they would succeed in rediscovering fire or reinventing the wheel. As a substitute for instinct and to increase the efficiency of learning, mankind developed culture. The ability to teach—as well as to learn—evolved, and trial-and-error learning became a method of last resort.

By transmission of culture—passing on the sum total of the learned behaviors common to a population—we can do what Darwinian genetic selection would not allow: we can inherit acquired characteristics. The wheel once having been invented, its manufacture and use can be passed down through the generations. Culture can adapt to change much faster than genes can, and this provides for finely tuned responses to environmental disturbances and upheavals. By means of cultural transmission, those behaviors which have proven useful in the past can be taught quickly to the young, so that adaptation to life—say on the Greenland ice cap—can be assured.

Even so, cultural transmission tends to be rigid: it took over one hundred thousand years to advance to chipping both sides of the hand-ax! Cultural mutations, like genetic mutations, tend more often than not to be harmful, and both are resisted, the former by cultural conservatism, the latter by natural selection. But changes do creep in, faster than genetic changes accumulate, and cultures slowly evolve. Even that cultural dinosaur known as the Catholic Church, despite its claim to be the unchanging repository of truth and "correct" behavior, has changed greatly since its beginning.

Incidentally, it is at this hand-ax stage of cultural evolution that most of today's religions are still stuck. Our inflexible, absolutist moral codes also are fixated at this stage. The Ten Commandments are the moral counterpart of the "here's-how-you-rub-the-sticks-together" phase of technological evolution. If the only type of fire you want is one to heat your cave and cook your clams, the stick-rubbing method suffices. But if you want a fire to propel your jet-plane, some changes have to be made.

So, too, with the transmission of moral behavior. If we are to live lives which are as complex socially as jet-planes are complex technologically, we need something more than the Ten Commandments. We cannot base our moral code upon arbitrary and capricious fiats reported to us by persons claiming to be privy to the intentions of the denizens of Sinai or Olympus. Our ethics can be based neither upon fictions concerning the nature of humankind nor upon fake reports concerning the desires of the deities. Our ethics must be firmly planted in the soil of scientific self-knowledge. They must be improvable and adaptable.

Where then, and with what, shall we begin?

Back to Ethics

Plato showed long ago, in his dialogue Euthyphro, that we cannot depend upon the moral fiats of a deity. Plato asked whether the commandments of a god were "good" simply because a god had commanded them, or because the god recognized what was good and commanded the action accordingly. If something is good simply because a god has commanded it, anything could be considered good. There would be no way of predicting what in particular the god might desire next, and it would be entirely meaningless to assert that "God is good." Bashing babies with rocks would be just as likely to be "good" as would the principle "Love your enemies." (It would appear that the "goodness" of the god of the Christian "Old Testament" is entirely of this sort.)

On the other hand, if a god's commandments are based on a knowledge of the inherent goodness of an act, we are faced with the realization that there is a standard of goodness independent of the god, and we must admit that he cannot be the source of morality. In our quest for the good, we can bypass the god and go to his source!

Given, then, that gods a priori cannot be the source of ethical principles, we must seek such principles in the world in which we have evolved. We must find the sublime in the mundane. What precept might we adopt?

The principle of "enlightened self-interest" is an excellent first approximation to an ethical principle which is both consistent with what we know of human nature and is relevant to the problems of life in a complex society. Let us examine this principle.

First we must distinguish between "enlightened" and "unenlightened" self-interest. Let's take an extreme example for illustration. Suppose you lived a totally selfish life of immediate gratification of every desire. Suppose that whenever someone else had something you wanted, you took it for yourself.

It wouldn't be long at all before everyone would be up in arms against you, and you would have to spend all your waking hours fending off reprisals. Depending upon how outrageous your activity had been, you might very well lose your life in an orgy of neighborly revenge. The life of total but unenlightened self-interest might be exciting and pleasant as long as it lasts—but it is not likely to last long.